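This article details a collaboration between Google DeepMind and professional animators, including Pixar alum Connie He, to produce the animated short film “Dear Upstairs Neighbors.” The core goal was to explore how generative AI tools such as Veo and Imagen can be integrated into high-end creative workflows without sacrificing artistic intent. The team faced challenges in maintaining stylistic consistency and precise motion control, which conventional text-to-video models struggle to address. In response, researchers developed custom fine-tuning techniques for visual concepts and novel video-to-video workflows that let animators use rough sketches or “performances” as input for AI generation. The process emphasized iterative refinement, localized editing, and 4K upscaling, treating AI as a collaborator rather than a “one-click” solution. The partnership let artists push creative boundaries while giving researchers practical insight into the needs of professional filmmakers.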

Today, our animated short film, “Dear Upstairs Neighbors,” previews at the Sundance Film Festival. The film will be showcased at the Sundance Institute’s Story Forum, a space focused on artist-first tools and technologies supporting visual storytelling.

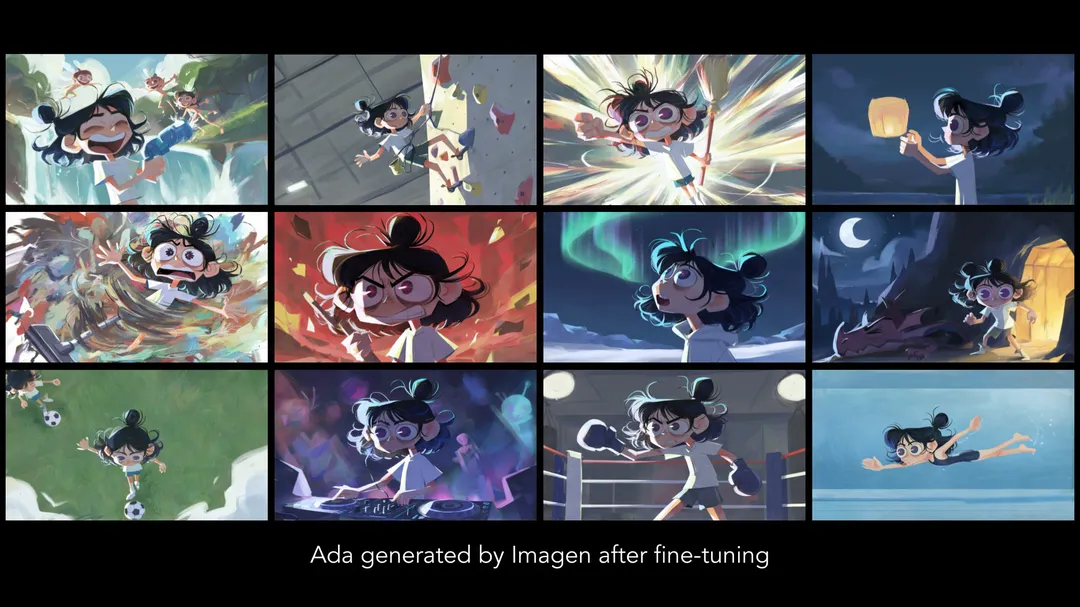

“Dear Upstairs Neighbors” is the story of a young woman, Ada, who is desperate for a good night’s sleep but kept awake by her exceedingly noisy neighbors. As she struggles to imagine what could be causing the cacophony upstairs, reality drifts into fantasy, and an epic battle for peace and sanity ensues.

The film is a collaboration between animation veterans, including director and Pixar alum Connie He, and researchers at Google DeepMind, united by a shared goal of exploring how generative tools might fit in with artists' creative processes.

From the start, the team aspired to empower animation artists to benefit from the creative potential of generative AI without sacrificing artistic control to its inherent unpredictability. To define her vision for this film, Connie developed the storyboards and enlisted award-winning production designer Yingzong Xin to create concept art and character designs. We committed to staying faithful to this artistic vision throughout shot production.

The expressionistic visual styles are central to the storytelling — and extremely difficult to achieve in traditional animation. We expected that AI could help fill the gap, but soon found that these styles were so unique, and our design choices so specific, that our researchers would have to develop new capabilities to provide the customization and control that we needed to bring the film to life.

Tune for new visual styles

Our first challenge was to produce shots consistent with Ada’s character design and the painterly styles that defined each scene. To achieve high quality and consistency, our researchers built tools that allowed our artists to fine-tune custom Veo and Imagen models on their artwork, teaching the models new visual concepts from just a few example images.

Show, don’t type

Another challenge was precisely controlling the content and motion of each shot. We knew that text prompting alone would never let us control the rhythm of Ada’s sleepy fingers typing, the comedic timing of her facial expressions, or the exact framing of a camera reveal. We needed a way to communicate that level of nuance and specificity to our AI models. Our researchers drew inspiration from how our animators communicate visually, by drawing, painting or acting out scenes. We developed novel video-to-video workflows, which allowed our animators to convey their intentions visually by creating rough animation in their tool of choice. Our models then transformed that animation into fully stylized videos that follow the input motion, with an adjustable balance between tight control and creative improvisation.

Iterate toward perfection

Even with the control provided by fine-tuning and video-to-video workflows, none of our final shots were created in a single “one-click” generation. Just as in any film production, we critiqued each shot in our “dailies” reviews, going through several rounds of feedback to get every detail right. To iterate on a shot without re-generating from scratch every time, we built tools for localized refinement, allowing us to edit specific regions of a video with an adjustable level of control.
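This post does not describe how the localized refinement tools work internally. As a rough illustration of the general idea of editing only one region of a video with an adjustable level of control, here is a minimal sketch that blends an already edited version of each frame into the original, limited to a mask. Everything in it is an assumption made for illustration: the function names `refine_region` and `refine_video`, the `strength` parameter, and the grayscale nested-list frame format are hypothetical and are not part of Veo, Imagen, or Flow. A real tool would presumably regenerate the masked region with a model; here the edited frames are simply taken as given.

```python
from typing import List

# Hypothetical frame format: rows of grayscale pixel values in [0, 1].
Frame = List[List[float]]


def refine_region(original: Frame, edited: Frame, mask: Frame,
                  strength: float) -> Frame:
    """Blend `edited` into `original` only where `mask` is non-zero.

    strength=0.0 keeps the original everywhere; strength=1.0 fully applies
    the edit where the mask is 1. Pixels where the mask is 0 never change.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    result = []
    for orig_row, edit_row, mask_row in zip(original, edited, mask):
        row = []
        for o, e, m in zip(orig_row, edit_row, mask_row):
            weight = m * strength  # per-pixel blend weight
            row.append((1.0 - weight) * o + weight * e)
        result.append(row)
    return result


def refine_video(original: List[Frame], edited: List[Frame], mask: Frame,
                 strength: float) -> List[Frame]:
    """Apply the same masked blend to every frame of a clip."""
    return [refine_region(o, e, mask, strength)
            for o, e in zip(original, edited)]


if __name__ == "__main__":
    original = [[0.0, 0.0], [0.0, 0.0]]
    edited = [[1.0, 1.0], [1.0, 1.0]]
    mask = [[1.0, 0.0], [0.0, 0.0]]  # only the top-left pixel is editable
    print(refine_region(original, edited, mask, strength=0.5))
```

In this sketch, the mask decides where a change is allowed and `strength` decides how far the result moves toward the edit, which loosely mirrors the separation between choosing a region and choosing a level of control.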

Finally, to prepare our film for the big screen, we used Veo's upscaling capability to bring our final shots to 4K resolution. Guided by our artists' critique, our researchers carefully tuned the model's behavior to add rich detail that preserved every nuance of the artistic style. The Veo 4K upscaling model is available in Flow and coming to Google AI Studio and Vertex AI later this month to meet the real-world needs of filmmakers.

Each shot presented unique challenges, and over the course of production, our multi-disciplinary team developed several workflows combining the precise control of hand-crafted animation with the stylistic flexibility and scalability of generative AI. Not only did our AI models produce hilarious bloopers, but they also often surprised us with unexpectedly beautiful and creative solutions. We learned valuable lessons from coming together every day to produce each shot with fine-grained artistic intention and care. Our artists found new creative powers through direct access to experimental research, and used their craft and perspective to help shape its development. Our researchers gained hands-on experience as technical artists, rapidly prototyping solutions to break through artistic and technological barriers. We’re excited to continue our mission to build generative AI with and for professional artists and filmmakers.